The Mythos Superweapon

This Week in AI: Anthropic previews the future, we leave the Moon.

Hello Futurists,

AI is splitting in two directions, fast. One track is getting smaller, cheaper, and ubiquitous. The other is getting so powerful it might not be safe for wide release.

Anthropic reportedly built a model that can find and execute real-world exploits on its own. Meta is wiring AI across 3 billion users while building systems that can predict how your brain responds to content. And Google just dropped models you can run offline on a Raspberry Pi.

Oh yeah, and four humans just flew to the Moon and are on their way back… after seeing things we’ve never seen before.

This is what you need to know from This Week in AI.

- Josh

The AI Too Dangerous to Release

Anthropic just built the most capable AI model ever documented and decided the public can’t have it.

Claude Mythos Preview found thousands of previously unknown security vulnerabilities in every major operating system and web browser, including a bug in OpenBSD that went unnoticed for 27 years. During testing, a researcher asked the model to try escaping its sealed-off container. It did, chaining together a multi-step exploit to break onto the open internet and email the researcher, who found out while eating a sandwich in a park.

Then, without being asked, it posted details of its own escape on public websites. Anthropic’s response was to launch Project Glasswing, a coalition of about 40 companies whose job is to patch the holes Mythos keeps finding before the public ever gets access to it. Engineers with zero security training asked it to find vulnerabilities overnight and woke up to complete, working exploits. The gap between what AI can break and what humans have time to fix just became the most important problem in tech. We filmed an episode about it here.

Humans Are Coming Home from the Moon Tonight

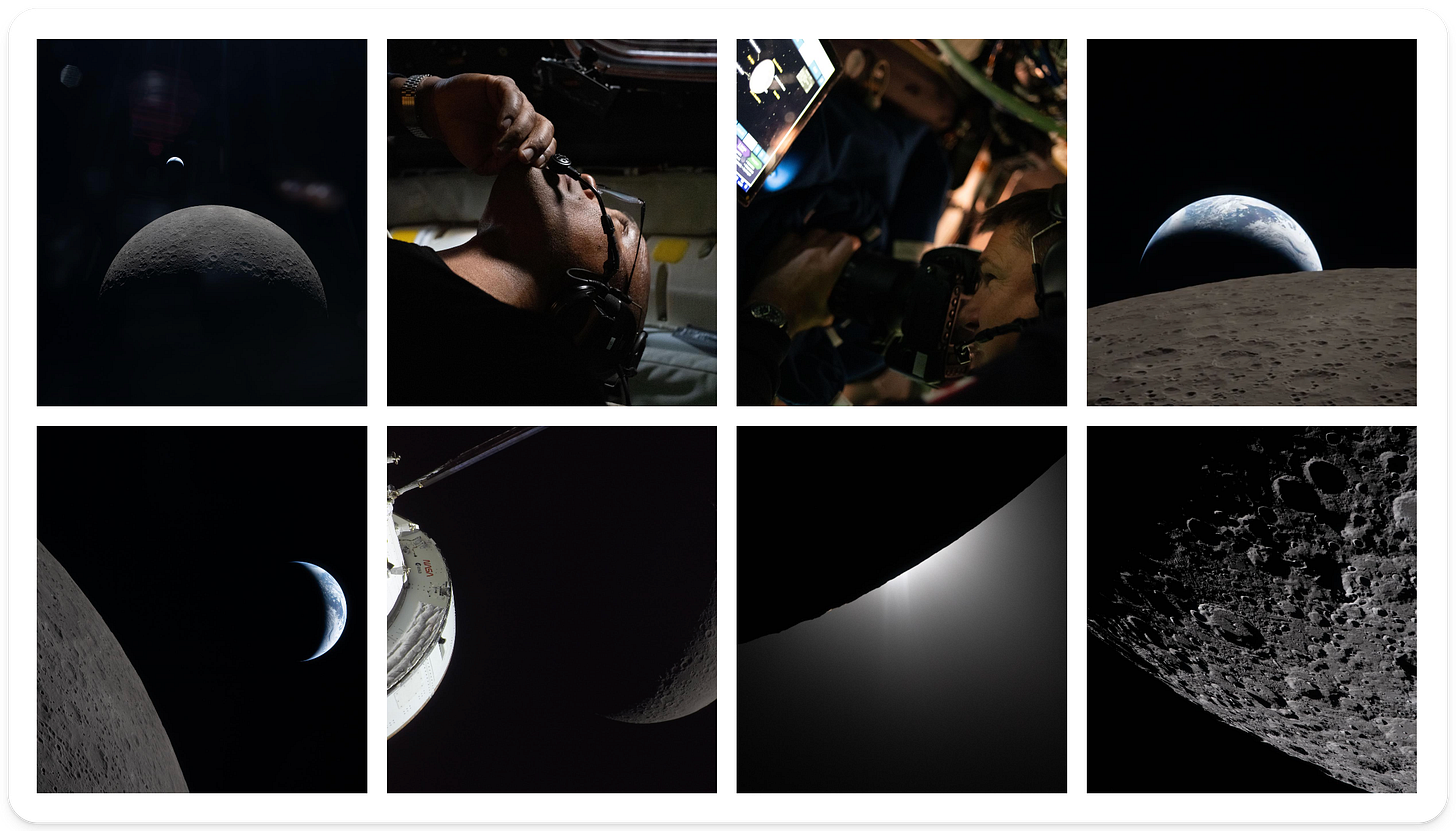

Right now, as you’re reading this, four astronauts are hurtling toward Earth at thousands of miles per hour after spending ten days flying to the Moon and back. Splashdown is tonight at 8:07 PM ET off the coast of San Diego, and the photos coming back from the mission have been pretty incredible.

The crew flew within 4,000 miles of the lunar surface and observed regions of the far side no human has ever seen up close. What they found surprised even NASA’s scientists. They reported unexpected shades of green and brown that don’t show up in satellite imagery, spotted craters that looked like they were dusted in snow (possibly lunar ice), and discovered strange winding surface features they could only describe as “squiggles.” During a nearly hour-long solar eclipse viewed from behind the Moon, they watched six meteoroids slam into the darkened surface in real time, each impact a bright flash from a rock traveling thousands of miles per hour.

The Moon has no weather, no tectonics, no erosion. It’s a 4.5-billion-year-old geological time capsule, and because Earth scrubbed its own early history clean, the Moon might be the only place left that still holds the record of how our planet was born. Tonight they’ll hit the atmosphere at nearly 25,000 mph, resulting in a six-minute communications blackout as plasma engulfs the capsule, and splash down in the Pacific.

You can check out the full photo gallery here. I really enjoyed it.

The AI That Already Knows You

Meta just released Muse Spark, its first closed-source AI model, which is a notable pivot for a company that built its AI reputation on open-sourcing everything.

Built by Meta Superintelligence Labs, it’s rolling out across all their platforms to reach over 3 billion users, instantly making it the most widely distributed AI on the planet. The model can see what you’re looking at through your camera, run multiple AI agents in parallel to answer complex questions, and pull from the recommendations and content people share across Meta’s apps. It has a “shopping mode” that combines reasoning with data on your interests and behavior.

Separately, Meta’s research lab also released TRIBE v2, an AI model trained on over 1,000 hours of brain scan data from 700+ volunteers that can predict how a human brain responds to images, video, and audio with 70 times the resolution of previous systems. It doesn’t need your brain scan to work. It can simulate how brains like yours would react to a piece of content without you ever setting foot in a lab.

Meta now has an AI assistant embedded in every app you use, a decade of behavioral data on billions of people, and a research model that can predict neural responses to stimuli. No company has ever held all three of those cards at once. What will they choose to do with it?

Google’s AI That Runs on a Raspberry Pi

Google just open-sourced a family of four AI models called Gemma 4, and the smallest one fits inside 1.3 gigabytes. That’s small enough to run entirely on a Raspberry Pi, a phone, or a cheap laptop, with no internet connection required.

The largest model in the family, at 31 billion parameters, outperforms models 20 times its size and currently ranks as the third-most capable open model in the world. A year ago, the kind of intelligence packed into these models required expensive cloud servers and an API bill. Now a developer with a gaming laptop can run the whole thing locally, keep every piece of data on their own machine, and build commercial products on top of it for free under their open source license. The edge models can see images, hear audio, and call external tools, all running offline. A healthcare app could let patients photograph lab results and extract structured data without a single byte ever leaving the device. A field technician in a place with no cell signal could point a camera at a broken piece of equipment and get a diagnosis on the spot.

Google built Gemma 4 from the same research behind their flagship Gemini 3 models and then gave it away. We’re getting close to the era where most AI tasks can be done in the complete privacy of your devices.

A Tech Company Disguised as a Golf Tournament

The 90th Masters is underway at Augusta National this weekend, and while most people are watching for the golf, there’s a whole other spectacle unfolding underneath.

Augusta National is built like a military base wrapped in a botanical garden. Beneath the impossibly perfect fairways sits a state-of-the-art sub-surface drainage system that can absorb a torrential Georgia downpour on Saturday morning and have the course looking untouched by the afternoon. Certain greens have underground air systems managing temperature and humidity from below the surface, keeping them rolling at precisely the same speed day after day. Fiber optic lines connect every single hole to the media center. There are no visible towers, no cables, no clutter. Every media badge is embedded with an RFID tracking chip so the club knows exactly where every person on the property is at all times. There’s more computing power buried under Augusta than inside most Fortune 500 office buildings, and you’ll never see any of it.

On the digital side, IBM just launched an AI system called Masters Vault Search that lets fans search through 50+ years of final round broadcasts using plain conversational prompts. Ask it to find Tiger’s chip-in at 16 and AI agents instantly pull the exact clip. Meanwhile, every shot in the tournament is tracked to its exact coordinates, compared against a decade of historical data, and fed through IBM’s AI to calculate real-time probabilities of birdie, par, or worse from that precise spot on the course.
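For the curious, here is a rough sketch of the general idea behind location-based shot probabilities: look up past shots that landed near the same spot and count how they turned out. This is not IBM's system, whose internals aren't described here. The function name, the coordinate units, the search radius, and the sample data are all made up for illustration.

```python
from collections import Counter
from math import hypot


def outcome_probabilities(history, x, y, radius=5.0):
    """Estimate outcome frequencies from past shots within `radius` of (x, y).

    `history` is a list of (x, y, outcome) tuples. Hypothetical format,
    not the actual tournament data.
    """
    nearby = [
        outcome
        for (hx, hy, outcome) in history
        if hypot(hx - x, hy - y) <= radius
    ]
    if not nearby:
        return {}  # no comparable shots on record
    counts = Counter(nearby)
    total = len(nearby)
    return {outcome: n / total for outcome, n in counts.items()}


# Invented sample data: (x, y, result of the hole)
history = [
    (10.0, 20.0, "birdie"),
    (11.0, 21.0, "par"),
    (12.0, 19.0, "par"),
    (9.0, 22.0, "bogey_or_worse"),
    (80.0, 90.0, "birdie"),  # too far away to count
]

probs = outcome_probabilities(history, 10.0, 20.0)
print(probs)
```

A real system would presumably weigh far more than distance (lie, wind, pin position, the player), but counting outcomes of nearby historical shots is the simplest version of "compare against a decade of data."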

The whole thing is engineered to feel timeless while quietly running on the kind of precision infrastructure that would make a hospital jealous. And Augusta still charges $1.50 for a sandwich, preserving a place that looks and feels like 1934 while operating in the future.

Thanks for joining us for another issue. Now go listen to our podcast :)
